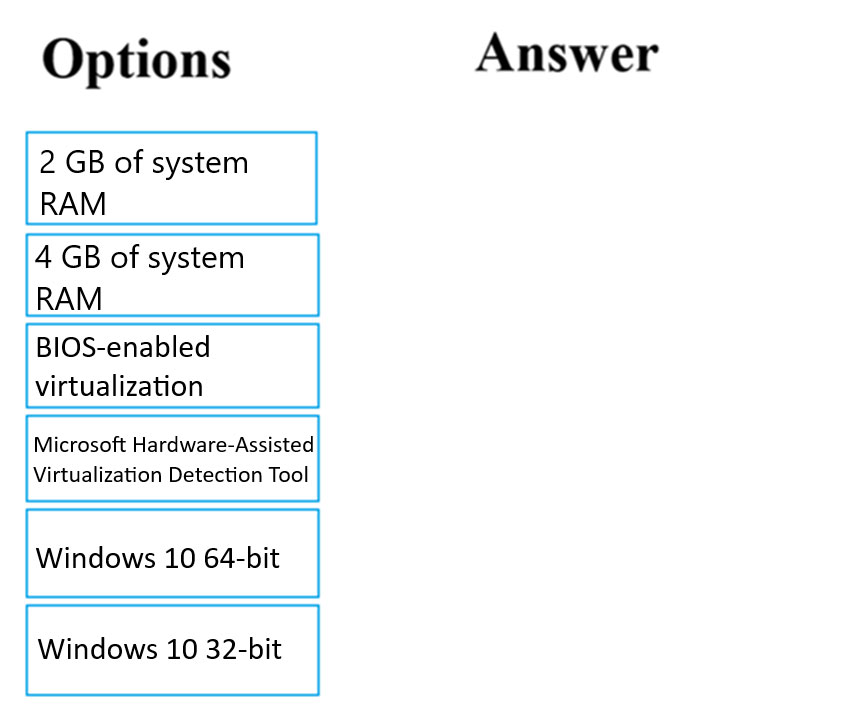

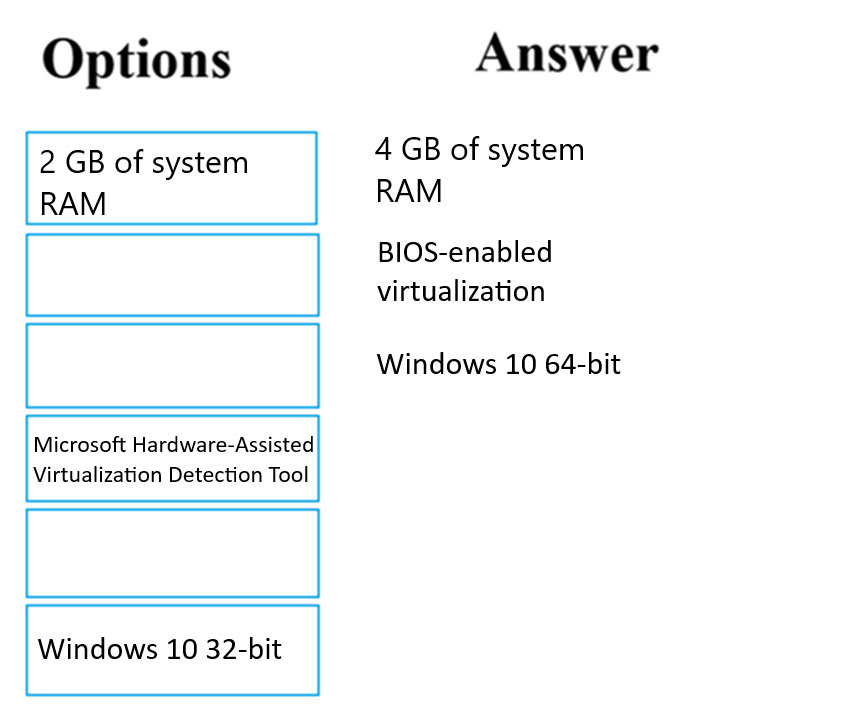

DRAG DROP -

You are planning to host practical training to acquaint staff with Docker for Windows.

Staff devices must support the installation of Docker.

Which of the following are requirements for this installation? Answer by dragging the correct options from the list to the answer area.

Select and Place:

Correct Answer:

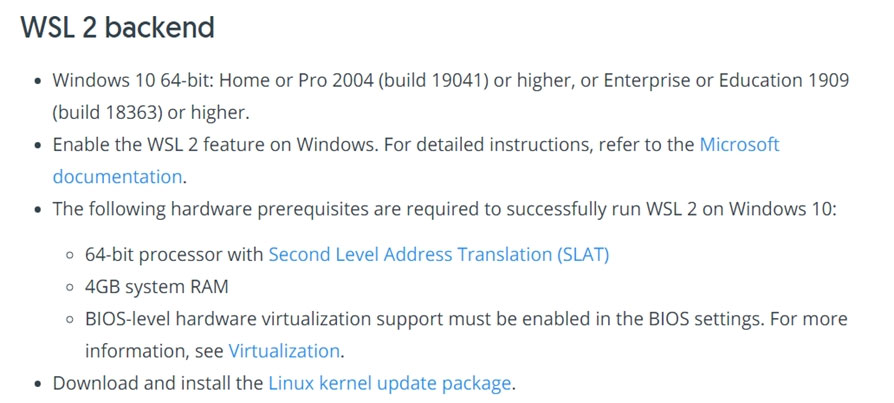

Reference:

https://docs.docker.com/toolbox/toolbox_install_windows/

https://blogs.technet.microsoft.com/canitpro/2015/09/08/step-by-step-enabling-hyper-v-for-use-on-windows-10/

https://docs.docker.com/docker-for-windows/install/

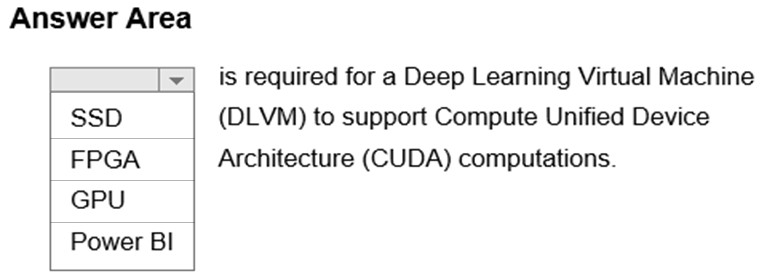

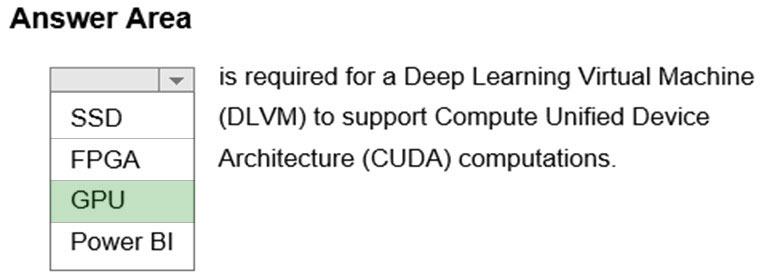

HOTSPOT -

Complete the sentence by selecting the correct option in the answer area.

Hot Area:

Correct Answer:

A Deep Learning Virtual Machine is a pre-configured environment for deep learning using GPU instances.

You need to implement a Data Science Virtual Machine (DSVM) that supports the Caffe2 deep learning framework.

Which of the following DSVMs should you create?

Correct Answer:

C


This question is part of a series of questions that depict the identical set-up. However, every question has a distinctive result. Establish if the recommendation satisfies the requirements.

You have been tasked with employing a machine learning model, which makes use of a PostgreSQL database and needs GPU processing, to forecast prices.

You are preparing to create a virtual machine that has the necessary tools built into it.

You need to make use of the correct virtual machine type.

Recommendation: You make use of a Geo AI Data Science Virtual Machine (Geo-DSVM) Windows edition.

Will the requirements be satisfied?

Correct Answer:

B


This question is part of a series of questions that depict the identical set-up. However, every question has a distinctive result. Establish if the recommendation satisfies the requirements.

You have been tasked with employing a machine learning model, which makes use of a PostgreSQL database and needs GPU processing, to forecast prices.

You are preparing to create a virtual machine that has the necessary tools built into it.

You need to make use of the correct virtual machine type.

Recommendation: You make use of a Deep Learning Virtual Machine (DLVM) Windows edition.

Will the requirements be satisfied?

Correct Answer:

A


This question is part of a series of questions that depict the identical set-up. However, every question has a distinctive result. Establish if the recommendation satisfies the requirements.

You have been tasked with employing a machine learning model, which makes use of a PostgreSQL database and needs GPU processing, to forecast prices.

You are preparing to create a virtual machine that has the necessary tools built into it.

You need to make use of the correct virtual machine type.

Recommendation: You make use of a Data Science Virtual Machine (DSVM) Windows edition.

Will the requirements be satisfied?

Correct Answer:

B


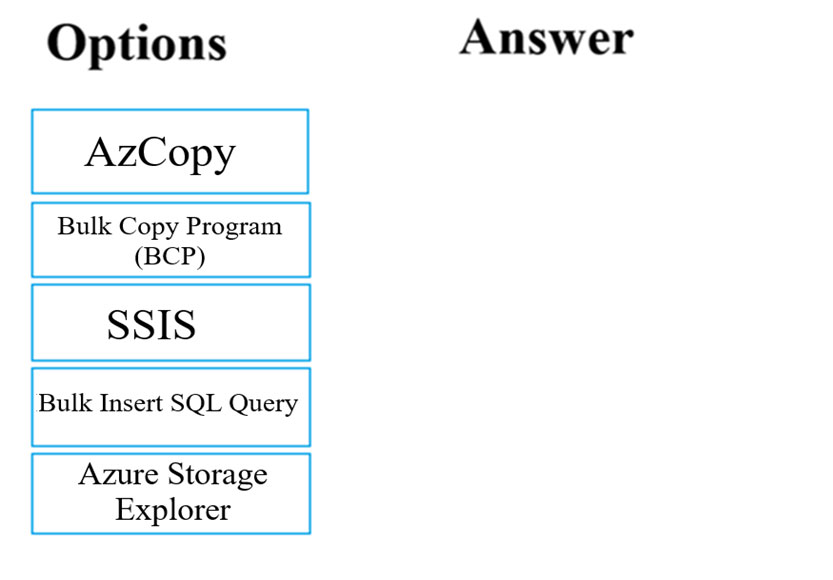

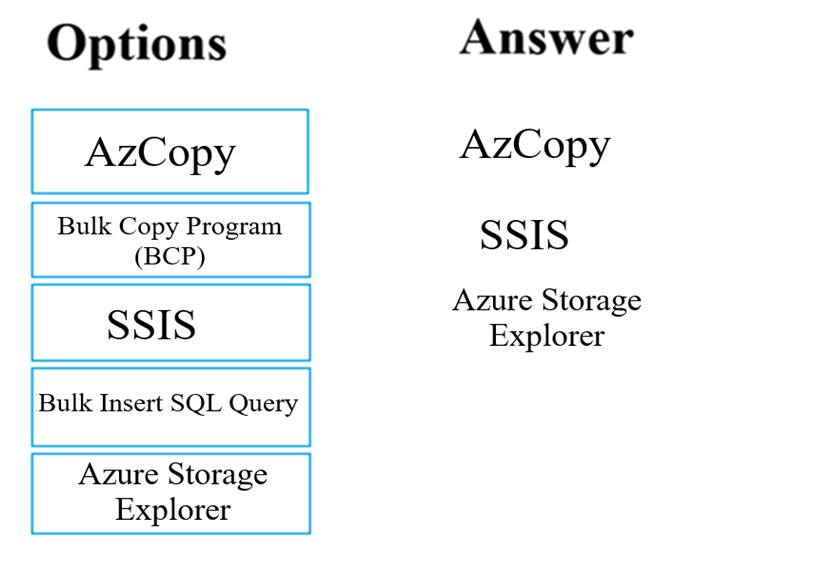

DRAG DROP -

You have been tasked with moving data into Azure Blob Storage for the purpose of supporting Azure Machine Learning.

Which of the following can be used to complete your task? Answer by dragging the correct options from the list to the answer area.

Select and Place:

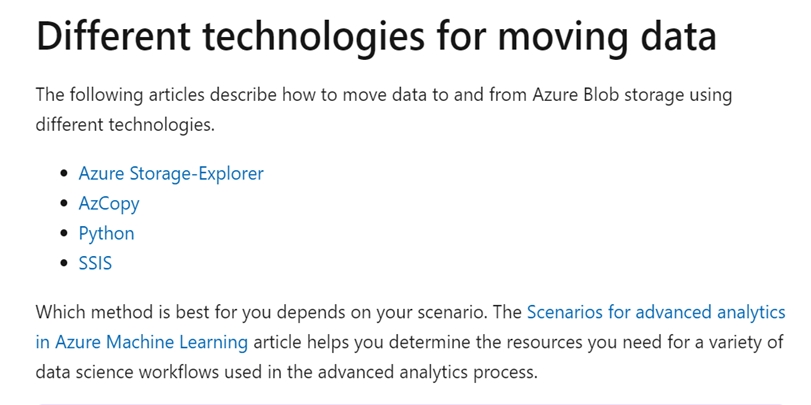

Correct Answer:

You can move data to and from Azure Blob storage using different technologies:

✑ Azure Storage Explorer

✑ AzCopy

✑ Python

✑ SSIS
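Of the options listed above, the Python route can be sketched as follows. This is a minimal sketch, assuming the `azure-storage-blob` package (v12) is installed; `upload_file` and `make_container_client` are hypothetical helper names, and the connection string, container name, and paths are placeholders rather than values from the question.

```python
def upload_file(container_client, local_path, blob_name):
    """Upload one local file as a blob, replacing any existing blob of that name."""
    with open(local_path, "rb") as data:
        # ContainerClient.upload_blob streams the open file to Blob Storage.
        container_client.upload_blob(name=blob_name, data=data, overwrite=True)


def make_container_client(connection_string, container_name):
    """Build a container client (hypothetical helper; assumes azure-storage-blob v12)."""
    # Imported lazily so upload_file can be exercised without the SDK installed.
    from azure.storage.blob import BlobServiceClient

    service = BlobServiceClient.from_connection_string(connection_string)
    return service.get_container_client(container_name)


# Example usage (placeholder values, not run here):
# client = make_container_client("<connection-string>", "training-data")
# upload_file(client, "prices.csv", "raw/prices.csv")
```

Passing the client in as an argument keeps the upload step independent of how credentials are obtained, which is a design choice of this sketch and not something the question prescribes.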

Reference:

https://docs.microsoft.com/en-us/azure/machine-learning/team-data-science-process/move-azure-blob

HOTSPOT -

Complete the sentence by selecting the correct option in the answer area.

Hot Area:

Correct Answer:

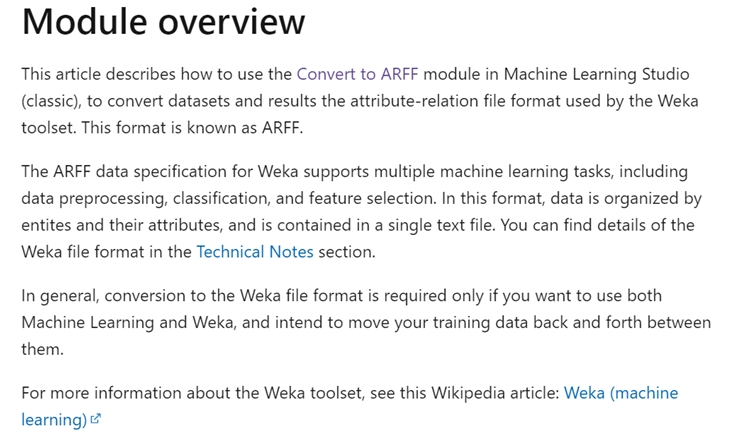

Use the Convert to ARFF module in Azure Machine Learning Studio to convert datasets and results in Azure Machine Learning to the attribute-relation file format (ARFF) used by the Weka toolset.

The ARFF data specification for Weka supports multiple machine learning tasks, including data preprocessing, classification, and feature selection. In this format, data is organized by entities and their attributes, and is contained in a single text file.
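To make the single-text-file layout concrete, here is a minimal sketch that renders a small table as ARFF text using only the Python standard library. It is not the Convert to ARFF module itself; `to_arff`, the `weather` relation, and the sample rows are hypothetical names and data chosen for illustration, and the sketch does not handle quoting of values that contain spaces or commas.

```python
def to_arff(relation, attributes, rows):
    """Render rows as ARFF text.

    attributes: list of (name, kind) pairs, where kind is "numeric",
    "string", or a list of allowed nominal values.
    """
    lines = [f"@relation {relation}", ""]
    for name, kind in attributes:
        if isinstance(kind, (list, tuple)):
            # Nominal attributes list their allowed values in braces.
            kind = "{" + ",".join(kind) + "}"
        lines.append(f"@attribute {name} {kind}")
    lines += ["", "@data"]
    for row in rows:
        # Each entity becomes one comma-separated line in the data section.
        lines.append(",".join(str(value) for value in row))
    return "\n".join(lines) + "\n"


arff_text = to_arff(
    "weather",
    [
        ("temperature", "numeric"),
        ("outlook", ["sunny", "rainy"]),
        ("play", ["yes", "no"]),
    ],
    [(30, "sunny", "no"), (18, "rainy", "yes")],
)
print(arff_text)
```

The header declares the attributes once, and every line after `@data` is one entity whose values follow the declared attribute order.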

Reference:

https://docs.microsoft.com/en-us/azure/machine-learning/studio-module-reference/convert-to-arff

You have been tasked with designing a deep learning model, which accommodates the most recent edition of Python, to recognize language.

You have to include a suitable deep learning framework in the Data Science Virtual Machine (DSVM).

Which of the following actions should you take?

Correct Answer:

B


This question is part of a series of questions that depict the identical set-up. However, every question has a distinctive result. Establish if the recommendation satisfies the requirements.

You have been tasked with evaluating your model on a partial data sample via k-fold cross-validation.

You have already configured a k parameter as the number of splits. You now have to set the k parameter for the cross-validation to its usual value.

Recommendation: You configure the use of the value k=3.

Will the requirements be satisfied?

Correct Answer:

B
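For context, k=10 is the value most often cited as the usual choice for k-fold cross-validation, with k=5 also common. The sketch below shows how k controls the number of splits, using only the Python standard library; `k_fold_indices` is a hypothetical helper, and the sample size of 20 is an assumption for illustration.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_indices, test_indices) for each of k folds."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    indices = list(range(n_samples))
    # Spread the remainder so fold sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        yield train, test
        start += size


# With k=10, every sample is held out exactly once across ten folds.
folds = list(k_fold_indices(n_samples=20, k=10))
for train, test in folds:
    print(len(train), "train /", len(test), "test")
```

In practice the data is usually shuffled before splitting; that step is left out here to keep the fold arithmetic easy to follow.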
